At work I’ve been instructed to get MySQL working in the cloud. I scoffed, whined and asked “Do we really need SQL?”. The Peanuts Gang teacher explained why and I didn’t really follow but I think I heard the words “business needs” and “blah blah blah”. If I had my druthers I’d ditch SQL for a key/value store but I digress.

I tend to prefer GCP over AWS because it’s the better infrastructure for containers imo. My understanding is the AWS MySQL solution is probably better but I’m not familiar with it so this is not a compare/contrast with AWS RDS/Aurora. I took Google Cloud SQL 2nd generation beta for a test drive with a focus on minimizing Ops, maximizing reliability and flexible replication and here’s what I found.

TL;DR Pros and Cons

GCP Hosted MySQL 2nd Generation beta has some great features but there are important MySQL incompatibilities to be aware of.

Pros

- Easy setup

- Automatic backups

- Fast and easy read replication within region

- Easy failover* within region

- Online ssd or disk storage size increase (decrease not available)

- IPv6

failover*: server only fails over if the entire GCP zone is down. Failures of smaller magnitude can still occur without triggering a failover. This could be a pro or con depending on the failure type.

Cons

- Read replicas not possible between regions

- Replicas with non-google-hosted masters or slaves not possible

- No private IP (so no Google Load Balancer)

- Downtime required for occasional maintenance

- Downtime for changing cpu or memory

- No custom server TLS cert

- No way to force TLS and not force client certs

- No Roadmap

Cons (in depth)

The GCP Cloud SQL Documentation does a good job at explaining the pros, so you can read about those there. They do not do a good job at explaining the cons, so I’m sure their marketing people are patting themselves on the back oblivious to the engineer spittle.

Impossible External Slave/Master

I tried two approaches to this: all inside of GCP and hybrid in/out.

Impossible Inter-Regional Replication, aka: hosted slave incompatible with MySQL

First, the Web UI does not allow you to configure a replica outside of the region of the master. I’d assume that the API call would barf if you tried the same, though I didn’t try.

So I created a SQL instance in the US and another one in Europe, both as hosted instances. After some simple configuration, I logged into the “slave” instance and issued the CHANGE MASTER mysql command which failed:

Access denied; you need (at least one of) the SUPER privilege(s) for this operation

And as is documented in the FAQ, the Google fork of MySQL has SUPER privileges stripped out. So you just can’t manually configure a hosted instance to be a MySQL compatible slave, period.

Impossible Hybrid Hosted/Non-Hosted Replication, aka: hosted master incompatible with MySQL

So what about an external MySQL slave and a GCP hosted master? So I created a test mysql server like so:

docker run -p 1234:3306 --name mysql-slave --rm -e MYSQL_ROOT_PASSWORD=password mysql --server-id=1234

And then run the mysql commands:

DROP DATABASE performance_schema;

DROP DATABASE mysql;

CHANGE MASTER TO MASTER_HOST='w.x.y.z';

start slave USER='user' PASSWORD='password';

And we get error:

2016-06-09T23:18:35.741239Z 4 [ERROR] Slave SQL for channel '': Error 'You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near 'CERTIFICATES' at line 1' on query. Default database: ''. Query: 'FLUSH CERTIFICATES', Error_code: 1064

2016-06-09T23:18:35.741360Z 4 [Warning] Slave: You have an error in your SQL syntax; check the manual that corresponds to your MySQL server version for the right syntax to use near 'CERTIFICATES' at line 1 Error_code: 1064

FLUSH CERTIFICATES is not a MySQL command, so we’ve clearly run into another incompatibility between Google’s fork of MySQL and MySQL proper.

No Private IP

GCP has a really nice SDN (software defined network). By default your entire project shares a private ipv4 network no matter which part of the world your VMs are located in. So normally I can communicate between the US and Europe within the rfc1918 network. And each host normally gets a /24 private IP range which is really useful for e.g. kubernetes.

So it’s confounding to discover that Google Hosted SQL instances only have a WAN IP. This means you can’t use a google load balancer in front of your hosted read replicas because those will only point at private IPs (or something like this, I don’t know how LB routing works behind the scenes).

This also means that for application connections you have to use one or more of:

- IP Whitelisting (hint: don’t pick only this one)

- Credentials

- Client Certificates

My recommendation is that if you don’t force client certificates, you at least ensure that your clients are enforcing TLS. With strong passwords you might be able to get away with whitelisting 0.0.0.0/0. Note that you can require TLS for users with:

CREATE USER foobazzer IDENTIFIED BY 'password here' REQUIRE SSL;

The workaround that GCP offers is by helping you setup a MySQL proxy within your private network. This is an okay workaround but has two major drawbacks:

- More Ops (more operations work)

- Less Performance

Hidden Roadmap

Since secrecy seems to be a cloud business strategy, we customers have no idea which of these cons might be fixed or mitigated in the GA (general availability) release. We don’t even know if there is going to be a GA release. Being unable to plan - to know which constraints are permanent and which you only need to mitigate for a short time - is really obnoxious.

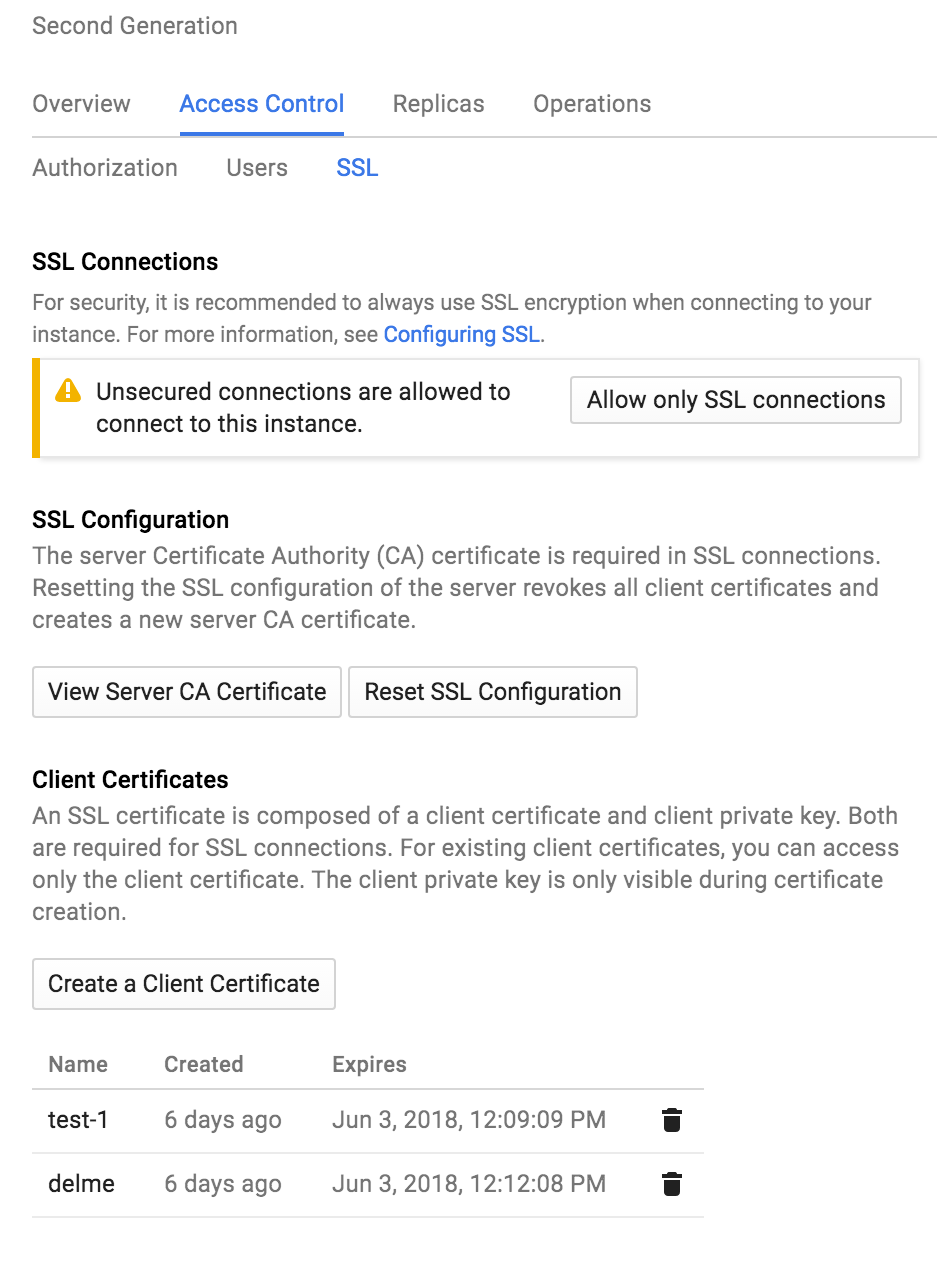

TLS Oddities

No Custom TLS Certs

I did not see any way to upload your own TLS cert. This means that you cannot use the mysql client option --ssl-verify-server-cert

Enforcing TLS or Client Certs?

This Web UI below confuses the TLS options.

There is no way via the UI to require TLS connections, but not require client certificates.

As mentioned above, to require TLS without client certs you can require SSL when you create the user:

CREATE USER foobazzer IDENTIFIED BY 'password here' REQUIRE SSL;

Sha1?

This is the TLS cert generated automatically by instantiation:

Certificate:

Data:

Version: 3 (0x2)

Serial Number: 0 (0x0)

Signature Algorithm: sha1WithRSAEncryption

Issuer: CN=Google Cloud SQL Server CA, O=Google, Inc, C=US

Validity

Not Before: Jun 3 18:49:47 2016 GMT

Not After : Jun 3 18:50:47 2018 GMT

Subject: CN=Google Cloud SQL Server CA, O=Google, Inc, C=US

Subject Public Key Info:

Public Key Algorithm: rsaEncryption

Public-Key: (2048 bit)

Modulus:

00:89:9a:0e:df:d2:6e:6d:a3:5e:76:22:88:67:56:

7f:7f:c8:41:99:48:92:30:b3:d2:88:68:0e:d5:bd:

99:19:5d:37:6f:07:72:a6:65:b3:f1:fb:01:51:67:

56:46:0d:ac:ab:f2:4d:1b:1b:8c:16:34:5e:38:4e:

da:33:04:c4:c2:9a:3f:5c:ce:24:af:ba:29:55:8f:

28:29:20:d1:26:41:3b:99:bc:19:bf:b0:e5:ea:7d:

e0:fd:a5:f0:c8:4d:40:47:4d:73:4c:d4:05:aa:c7:

ff:18:cd:a0:72:d6:1d:a5:c3:d0:f4:10:03:b5:17:

2a:4e:fe:5b:3d:a5:7d:e5:68:ea:be:26:02:a7:8f:

61:f9:0c:04:ca:a1:78:09:b1:b2:2c:5c:eb:92:3a:

85:fa:25:ef:4b:bd:79:fe:e6:86:c5:20:23:3d:a6:

48:f9:62:14:1d:1d:de:a8:86:60:86:55:1a:fd:91:

04:a9:2c:c2:06:c4:fb:0c:24:12:89:2d:bb:2b:4a:

32:cd:b6:60:8a:4e:c8:2c:46:9b:00:9d:ca:83:22:

5f:c1:57:12:2f:20:30:11:84:8f:90:4e:a0:a1:7d:

1d:5f:0b:79:98:65:2d:38:74:36:4e:85:6f:e4:8c:

86:29:93:9b:89:7c:47:10:67:a8:cb:9d:f0:67:b5:

64:a1

Exponent: 65537 (0x10001)

X509v3 extensions:

X509v3 Basic Constraints: critical

CA:TRUE, pathlen:0

Signature Algorithm: sha1WithRSAEncryption

6f:a6:f3:7e:03:33:ad:e7:cf:26:ee:22:97:de:cf:8f:53:af:

6d:7d:6a:bb:65:ba:93:eb:cc:7b:12:81:1b:8e:ce:17:5c:e9:

a2:e9:a1:a3:53:4b:2b:59:f8:1c:1b:a0:9b:a3:50:68:bf:aa:

c5:43:d2:8d:2d:80:15:9e:96:86:8e:93:a1:54:d2:20:07:6e:

76:1c:7d:c5:79:17:a9:5b:9a:4e:d4:d8:6b:ec:d2:06:e4:dc:

9f:98:fb:d3:9e:4b:41:5e:f5:30:50:7c:48:21:96:1a:9a:ed:

2f:b9:4d:c1:ff:59:48:8a:10:41:6e:f7:56:38:3f:41:7e:ae:

ab:9b:be:83:52:3f:fa:e1:33:e8:0a:b9:6a:ec:c7:16:b6:a1:

40:a6:5b:06:56:3d:3f:52:96:29:36:24:89:aa:8d:80:ba:24:

92:cd:66:44:64:89:30:9c:c3:4e:45:c7:df:4a:07:90:f4:d3:

eb:fb:0c:50:88:6f:a5:97:87:75:ac:4e:62:f0:d5:b1:33:3d:

ed:0c:bd:c7:a4:71:8d:eb:7d:8b:af:87:64:63:46:c6:28:72:

4f:13:59:ef:5c:8b:42:09:50:ff:84:48:7b:4e:c4:b5:23:1d:

b9:b7:34:40:fa:61:8a:af:da:8b:93:0a:ab:82:e7:46:14:61:

a2:d1:e2:09

notice anything strange? GCP is signing these certs with old sha1. AFAIK, this is not actually important in this case because these TLS certificates can only be used for encryption and cannot be used for server verification anyway. Still, it’s the sort of thing that might make you double take and pause to think over.

Downtime for Changing CPU/Memory

On a smaller instance with not much data on it, I changed the machine size while querying the instance. It stayed up for about a minute while, I assume, it brought up a newly sized vm. Then the machine did not return queries for about 2.5 minutes, I assume while syncing, before coming back up.

I might just be wanting a pony here, but it would be outstanding if this didn’t require any downtime. The time when you’re most likely going to care about scaling vertically is when you’re handling a larger amount of queries than usual and hence when downtime is the most painful.

Downtime for Required Maintenance

You cannot control if your instance will go down for maintenance. This means that your application(s) must tolerate some downtime.

At the moment, you may select the day of week and hour of day to restrict maintenance to: e.g. Sunday between 2 - 3 AM local time.

Downtime Tolerance

For many reasons you should write your applications to be tolerant of downtime. Queueing writes while fanning out reads is a really good scaling/tolerance strategy.

But if your application was not constructed this way, GCP hosted SQL may not be a good fit for you.

Slightly Broken Web UI

A couple bits of their Web UI was broken when I tested.

The “Download server-ca.pem” link would download an empty file. The only way to download the cert was to first create a client key and download it from that popup.

The menu item “Access Control -> Users” would yield “Failed to load”. It’s not possible to see the users on the SQL box via the Web UI.

This is the sort of thing might be obnoxious if you’re not skilled at using their API or CLI.

Conclusion

The pros of GCP Hosted SQL are pretty impressive and the cons can hurt if you’re not prepared.

If you do not have sophisticated replication needs, this 2nd generation beta makes managing SQL easy. But this just won’t work for non-trivial topologies.

Comments

comments powered by Disqus